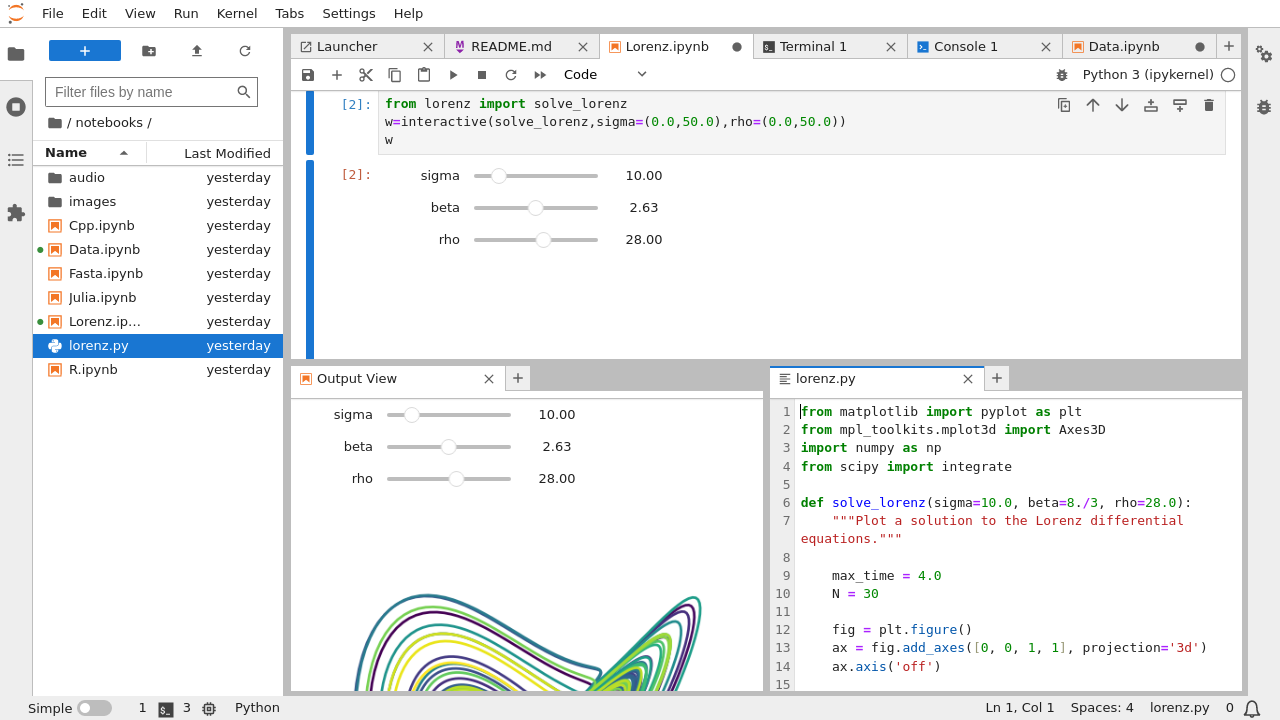

Initialization

For information on how to use the Initialization parameter, please refer to the Initialization - Bash script, Initialization - Conda packages, and Initialization - pip packages sections of the documentation. Besides the Initialization parameter, packages can also be installed from the terminal interface, which is available in all the starting modes.

The Batch processing parameter is used to execute a Bash script in batch mode.

A Spark session can also be created directly in a notebook. The snippet for this case starts with `import os`, `from pyspark.conf import SparkConf` and `from pyspark.sql import SparkSession`, creates the log directory with `os.mkdir('/work/spark_logs')`, and builds the configuration with `conf = SparkConf().setAll([( … ), ( …, True ), ( …, "/work/spark_logs" ), ( "….logDirectory", "/work/spark_logs" )])`. The session is then created from it with `spark = SparkSession. …`. It is assumed that the selected machine type has at least 16 cores.

In this mode the applications can be monitored using the SparkMonitor extension of JupyterLab, which is available in the app container and accessible from the top menu. By default, the Spark UI is served on port 4040.

Spark applications which require distributed computational resources can be submitted directly to a Spark standalone cluster, which allows data processing tasks to be distributed across multiple nodes. In this case, the JupyterLab app should be connected to a Spark Cluster instance using the optional parameter Connect to other jobs, where the Job entry is used to select the job ID of the Spark Cluster instance, created in advance. In addition, the Hostname parameter is used to assign the URL of the master node to the SparkContext created in the JupyterLab app. The default port on the master node is 7077.
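The two ways of pointing a Spark session at a master described above can be sketched as follows. This is a minimal sketch under stated assumptions, not the snippet from the original documentation: the configuration keys (`spark.master`, `spark.eventLog.enabled`, `spark.eventLog.dir`, `spark.history.fs.logDirectory`) are standard Spark settings chosen here because they match the surviving values, and `local[16]` is inferred from the 16-core remark. The helper names are hypothetical.

```python
import os


def local_master(cores):
    """Master URL for running Spark inside the notebook container."""
    return f"local[{cores}]"


def standalone_master(hostname, port=7077):
    """Master URL for a Spark standalone cluster (7077 is the default port)."""
    return f"spark://{hostname}:{port}"


def event_log_settings(master, log_dir):
    """Key-value pairs for SparkConf.setAll.

    The keys are assumptions: standard Spark options that fit the values
    which survive in the text above.
    """
    return [
        ("spark.master", master),
        ("spark.eventLog.enabled", "true"),
        ("spark.eventLog.dir", log_dir),
        ("spark.history.fs.logDirectory", log_dir),
    ]


def build_session(settings, log_dir):
    """Create the log directory and start (or reuse) a SparkSession."""
    # pyspark is imported here so the helpers above work without Spark installed.
    from pyspark.conf import SparkConf
    from pyspark.sql import SparkSession

    os.makedirs(log_dir, exist_ok=True)
    conf = SparkConf().setAll(settings)
    return SparkSession.builder.config(conf=conf).getOrCreate()
```

In a notebook, `build_session(event_log_settings(local_master(16), "/work/spark_logs"), "/work/spark_logs")` would start a session on 16 local cores. Swapping in `standalone_master(hostname)`, with `hostname` taken from the Hostname parameter, would target the cluster's master node instead.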

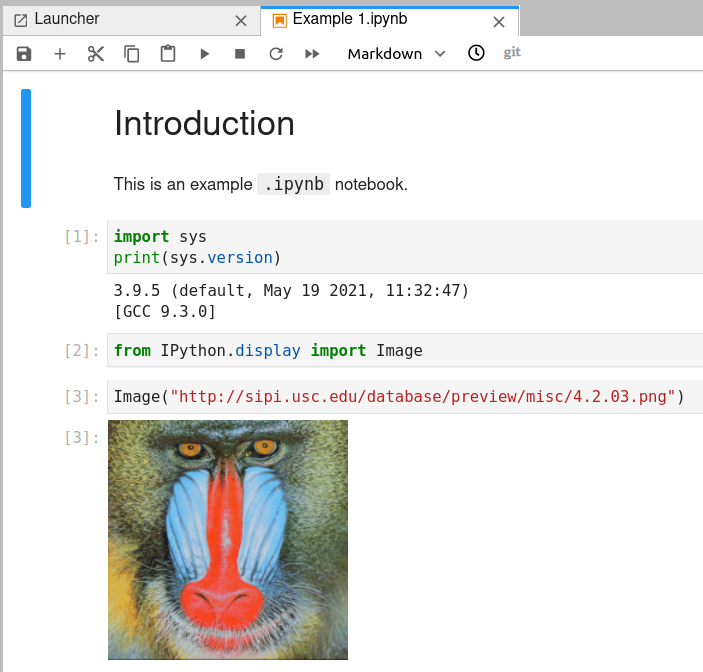

Several programming language kernels are pre-loaded in the app container.

The JupyterLab app is implemented with three starting modes, which can be selected via the Deployment mode parameter.

JupyterLab is a web-based integrated development environment for Jupyter notebooks, code, and data. Its user interface is flexible: it can be configured and arranged to support a wide range of workflows in data science, scientific computing, and machine learning.
